rsc's Diary: ELC-E 2018 - Day 3

This is my report from the 3rd day of Embedded Linux Conference Europe (ELC-E) 2018 in Edinburgh.

Keynote: Astronomy with Gravitational Waves

In the first talk of the morning, Dr. Alexander Nitz from MPI for Gravitational Physics talked about gravitational waves and the LIGO experiment. Let me note that the GEO600 detector (which was involved in the experiments and is part of a global network of gravitational wave detectors) is just 10 km away from the Pengutronix office in Hildesheim (and we have visited it with the crew some years ago).

Gravitational waves are emitted when masses orbit each other, but unfortunately the effect is tiny, even when you observe merging black holes. The first actual detection with the LIGO detectors in the USA happened back in 2015.

Another way to observe gravitational waves is the merger of neutron stars; in contrast to merging black holes, it has the advantage that the system emits electromagnetic radiation while merging, and in fact in 2017 it was possible to correlate a gamma ray burst with signals from the wave detectors.

So far, seven events have been observed, so scientists are beginning to understand the characteristics of the signals and to find out which kinds of events they can be correlated with. All this is a good start, but for the future, scientists plan to extend the activities towards other parts of the spectrum.

Finally, the data is processed with open source software written in Python, and in fact even the data itself is open data.

OpenOCD - Beyond Simple Software Debugging

My colleague Oleksij Rempel works on OpenOCD and JTAG, mainly as part of his hobby project of reverse engineering unknown hardware, with a focus on MIPS based WiFi hardware for the Freifunk project. JTAG is a pretty old technology, dating from the early 1990s. Most users just use it for pushing software into a system; however, boundary scan is the more interesting part for him. Most chip vendors publish the BSDL files (which describe the boundary scan register), but some don't, or at least not to normal people, and in this case you need to guess what the bits might mean.

The first step of working with JTAG is to locate the JTAG port, which is not always easy: most Allwinner SoCs, for instance, multiplex the JTAG pins with the SD card signals and expose them only in a short time window at the beginning of the power-on sequence.

To hook in, he used a programmable power supply and an SD card multiplexer. If you attach some pull-up resistors to the right lines, it is possible to use a logic analyzer to find the right pins. Once you have done that, you can start playing with the bits to find out what they mean: the chain can be scanned, and by adding pull-ups and pull-downs, the purpose of certain bits can be explored. However, it turned out that some chips are easier to analyze than others.
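The pull-up/pull-down exploration boils down to comparing two captures of the boundary scan register. The following is a minimal sketch of that comparison, assuming the captures are already available as strings of `0` and `1`; the function name and the sample values are hypothetical, and nothing here talks to OpenOCD or to real hardware.

```python
from typing import List


def changed_bits(before: str, after: str) -> List[int]:
    """Return the positions at which two boundary scan captures differ.

    Both captures are strings of '0'/'1' of equal length, e.g. one taken
    with a pull-down and one with a pull-up on the pin under test.
    """
    if len(before) != len(after):
        raise ValueError("captures must have the same length")
    return [i for i, (a, b) in enumerate(zip(before, after)) if a != b]


if __name__ == "__main__":
    # Hypothetical captures: only the bit at position 5 follows the resistor.
    with_pull_down = "1100100011"
    with_pull_up = "1100110011"
    print(changed_bits(with_pull_down, with_pull_up))
```

A bit that flips reliably with the resistor is a good candidate for the input cell of that pin; bits that change between otherwise identical captures are probably driven by something else.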

Another use case was to play with JTAG on i.MX6: when using boundary scan, the device goes into reset state. The chain does not only go to the actual processor, but also to components such as the SDMA controller and the PCIe and SATA PHYs. At this point in time, OpenOCD only supports the main CPU, so there are many more challenges waiting for interested developers.

On this Rock I will Build my System - Why Open-Source Firmware Matters

In the last Pengutronix talk of this conference, my colleague Lucas Stach talked about why open source firmware in today's SoCs matters a lot. He works on low-level graphics tasks for industry projects, and most of the devices he deals with have to be maintained for a very long time.

Traditionally, the firmware on those systems is really minimalistic: setting up the hardware, then fully passing control to the kernel. On a traditional system, there is basically no interaction between the kernel and the firmware at runtime. In this setup, the Linux kernel is in full control of what happens on the system, so the update story is quite easy: updating the kernel is what most people have at least thought about - in contrast to updating the firmware. With this model, the kernel is in control of everything, which contains a certain amount of complexity, but this complexity is there because the systems are complex, not because of the Linux kernel.

The moment things become complicated is when virtualization kicks in: in a virtualized system, none of the virtual machines shall directly talk to the hardware; this task is pushed to a hypervisor. As functionality shouldn't be split, PSCI was invented (the Power State Coordination Interface). On ARM, PSCI is a secure monitor call, and it makes bare metal kernel and virtualization look the same. However, central functionality has to be pushed into the secure monitor firmware. As it turned out that chip vendors started to implement things wrong, ARM started implementing PSCI in Trusted Firmware to make it right. However, as Trusted Firmware is BSD licensed, it makes it possible for the chip vendors to close the code down and have really central components of the system in closed code, with no options for kernel devs for improving things.

Experience shows that when this design hits the real world, things happen that the developers didn't think of. For instance, Lucas has seen implementations where power domain bits for different processors have been in the same registers, without having an interlocking mechanism. Another example is the communication with an external power controller: in system bring-up, the firmware needs to talk to the PMIC via I2C, which should be under control of the kernel. So in fact, a lot of current hardware isn't designed to provide the separation required by PSCI.

As a result, even more stuff is pushed down into firmware by using the SCMI interface. It makes it possible to handle power, clocks, sensors and system control down in the firmware. Now the implementation in the kernel becomes easy, but firmware gets much more complex, and lots of runtime interactions between firmware and operating system become necessary. If you are now hunting down a system malfunction, you cannot look at a single code base any more. Even worse, the firmware part might be closed source, so things become really hard to fix.

Unfortunately, as soon as anyone cares about virtualization, the complexity cannot be ignored any more. Even worse, looking at more modern systems, SoCs are becoming more asymmetric and add management processors to the application processors. While it becomes easier to offload functionality to the coprocessors, the need for more shared resources explodes: e.g. Linux is suddenly just one of the users of clock control. Vendors now start adding system control coprocessors for these central tasks, with all kinds of weird interfaces.

In conclusion, firmware is taking more and more control over our systems; we should keep a close eye on whether the chip companies continue to open source their firmware code. There are some good examples out there, like Xilinx, which opens up all their code, but we need to stay fully awake and try to push vendors in an open direction.

The GNSS Subsystem

After lunch, Johan Hovold talked about the newly merged GNSS kernel subsystem, dealing with Global Navigation Satellite Systems. So far, talking to GNSS receivers has been handled entirely in userspace, but this has changed recently. While the initialization and triangulation algorithms mainly run inside the GNSS chipset, the devices usually incorporate an interface such as a UART or USB to connect to the SoC. On the receiver protocol side, NMEA 0183 is the de-facto standard; however, proprietary vendor specific protocols are also around, and in some cases it is even possible to switch between both variants.

On Linux, gpsd is usually used in userspace and deals with the serial or USB port and with power management. However, it turned out that this was not enough, especially when it comes to power state information: in some cases, this has to be handled inband, and in more sophisticated embedded scenarios it even had to handle serdev devices. This, in combination with regulator, GPIO and clock handling, meant that something had to change. Nevertheless, pushing everything into the kernel was not a solution either, as for example some proprietary protocols cannot be implemented in the kernel. So a decision was made to keep the protocol handling in userspace and move the other mechanisms into the kernel.

The framework was merged into the 4.19 kernel, released last Monday. It currently supports NMEA, SiRFstar and UBX style devices and provides a /dev/gnss0 interface. Power regulator issues are handled by devicetree specifications. Line speed handling and hotplugging are not resolved yet, but things are being discussed.
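Since protocol handling stays in userspace, a consumer of the character device still has to parse and validate the receiver's output itself. The following is a minimal sketch for the NMEA 0183 case, assuming the receiver is in NMEA mode and the device node is /dev/gnss0; the function names are made up for this example. The checksum rule (XOR of all characters between `$` and `*`) is part of NMEA 0183.

```python
from functools import reduce
from typing import Iterator


def nmea_checksum(body: str) -> int:
    """XOR of all characters between '$' and '*' (both excluded)."""
    return reduce(lambda acc, ch: acc ^ ord(ch), body, 0)


def is_valid_nmea(sentence: str) -> bool:
    """Check framing and checksum of a single NMEA 0183 sentence."""
    sentence = sentence.strip()
    if not sentence.startswith("$") or "*" not in sentence:
        return False
    body, _, given = sentence[1:].partition("*")
    if len(given) < 2:
        return False
    try:
        return nmea_checksum(body) == int(given[:2], 16)
    except ValueError:
        return False


def read_sentences(path: str = "/dev/gnss0") -> Iterator[str]:
    """Yield valid NMEA sentences from the GNSS character device.

    Assumption: the device delivers line-based NMEA output; receivers in a
    binary vendor protocol mode need a different parser.
    """
    with open(path, "rb") as dev:
        for raw in dev:
            line = raw.decode("ascii", errors="replace").strip()
            if is_valid_nmea(line):
                yield line
```

On a board with a receiver attached, `for s in read_sentences(): print(s)` would print the validated sentences; everything beyond that (fix parsing, time handling) is left to tools like gpsd.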

In addition to standalone receivers, there are devices available that are integrated into modems, which offer more sophisticated features such as A-GPS or reduced-time-to-fix, or integration with the ofono stack; as it turned out that not everything could be done in the kernel, ugnss was invented which pushes part of the handling back into userspace.

For the future, features like integration with pulse per second devices, low-noise amplifiers, more ugnss support and a new high-level interface remain to be solved.

IoT TLS: Why it is Hard

David Brown then talked about TLS in the Zephyr context: he found that none of the IoT examples around were secure. From a scientific perspective, IoT security is not different from normal IT security, but reality is different: as long as you talk about Raspberry Pis, it is a normal Linux, with OpenSSL etc. But what about really small and cheap systems? They might not even have enough memory for OpenSSL; and, of course, there are lots of "middle size" devices which might have enough memory, but it's hard.

On midsize devices, there might not even be a "real" kernel that implements the lower layers of the network stack. The TLS handshake consists of the device and the server agreeing on a cipher suite and exchanging certificates; however, in contrast to "big" servers, once a certain cipher suite becomes insecure, the device might not even be able to talk to anyone any more, so one should make sure software can be updated. Another aspect is that the whole security depends on good randomness on the device. So when selecting CPUs for IoT devices, one should watch out for a model with a good hardware random number generator. If randomness is bad, the time needed to break the encryption might go down from "more than the remaining lifetime of the universe" to "just a few minutes". And of course the certificate of the server you are talking to needs to be properly checked. Resources are pretty much constrained on embedded devices: they might just have something like 100 kB of memory, and there might not be a good time source.
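To put rough numbers on the randomness argument, here is a back-of-the-envelope sketch. The guessing rate of one billion tries per second and the two entropy figures (128 bits for a healthy generator, 40 bits for a poorly seeded one) are assumptions chosen for illustration, not numbers from the talk.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600
AGE_OF_UNIVERSE_YEARS = 1.38e10  # rough current estimate


def brute_force_seconds(entropy_bits: int, guesses_per_second: float = 1e9) -> float:
    """Worst-case time to try every value of a secret with the given entropy."""
    return 2 ** entropy_bits / guesses_per_second


if __name__ == "__main__":
    strong_years = brute_force_seconds(128) / SECONDS_PER_YEAR
    weak_minutes = brute_force_seconds(40) / 60
    print(f"128 bits: {strong_years:.1e} years "
          f"({strong_years / AGE_OF_UNIVERSE_YEARS:.1e} times the age of the universe)")
    print(f" 40 bits: {weak_minutes:.0f} minutes")
```

The key length on the wire is the same in both cases; what shrinks is the number of values the attacker actually has to try.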

On the software side, existing TLS libraries such as OpenSSL are not designed for devices that use a single-threaded software pattern. Normally, TLS is integrated into the transport layer, so it is mostly transparent to the application; however, on small devices, this abstraction doesn't fit: there is often a mainloop, so sending and receiving packets is done in the same loop.
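To illustrate what a mainloop-friendly TLS interface looks like, here is a small sketch using Python's standard `ssl` module on a host machine. It is not Zephyr code and not what David presented; it only shows the pattern of decoupling TLS from the socket: the TLS engine reads from and writes to memory buffers, and the mainloop decides when bytes actually go over the wire. The helper names are made up for this example.

```python
import ssl
from typing import Tuple


def start_client_handshake(hostname: str) -> Tuple[ssl.SSLObject, ssl.MemoryBIO, ssl.MemoryBIO]:
    """Create a TLS client that talks to memory buffers instead of a socket."""
    ctx = ssl.create_default_context()  # verifies certificate and hostname
    incoming = ssl.MemoryBIO()  # bytes received from the network go in here
    outgoing = ssl.MemoryBIO()  # bytes to be sent to the network come out here
    tls = ctx.wrap_bio(incoming, outgoing, server_hostname=hostname)
    return tls, incoming, outgoing


def handshake_step(tls: ssl.SSLObject, outgoing: ssl.MemoryBIO) -> Tuple[bool, bytes]:
    """Advance the handshake without blocking.

    Returns (done, data), where data must be sent to the peer by the caller.
    """
    try:
        tls.do_handshake()
        done = True
    except ssl.SSLWantReadError:
        # The engine needs more bytes from the peer; return to the mainloop.
        done = False
    return done, outgoing.read()


if __name__ == "__main__":
    tls, incoming, outgoing = start_client_handshake("example.com")
    done, data = handshake_step(tls, outgoing)
    print(done, len(data))  # not done yet; data holds the ClientHello record
```

In a real mainloop, the application would send `data` whenever the transport is writable, feed received bytes into `incoming.write()`, and call `handshake_step()` again until it reports completion.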

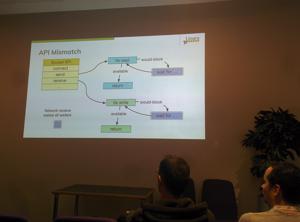

David looked at where Zephyr is now: while there still is Sockets+TLS support, the Zephyr network API changes towards ideas that fit the mainloop better; however, the work is not done yet, and the available patches are not merged yet. So sorry - at the moment, it stays hard.

A Sockets API for LoRa

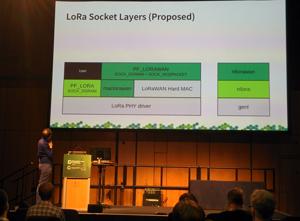

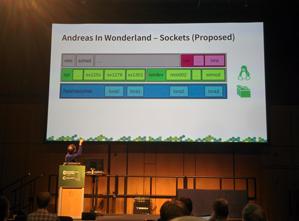

In the last talk of the day, Andreas Färber talked about LoRa, a low speed IoT protocol that works on license free ISM bands. When he started with his first hardware, there was a lot of low-level technology to deal with. The hardware he started with was equipped with a chipset without permanently stored register settings, and the MAC had to be done on the Linux side as well. A second generation of modules had a microcontroller running an optionally certified MAC, so there was a chance of certifying the resulting device. Still, the question remained how to integrate all that into Linux.

The first attempt of attaching LoRa devices goes via userspace interfaces (SPI, serial, USB); however, this has several issues. The solutions are mostly vendor specific, there is no upstream community, the stacks have license issues, and many other tasks that should be done by a kernel are externalized to userspace. So the idea came up to move chipset drivers into the mainline kernel and encourage generic, community-maintained packet forwarders.

Following this idea, he started collecting requirements and implemented some code, following the ideas of the kernel's WiFi and 802.15.4 stacks. Unfortunately, in contrast to Ethernet based protocols, there is no good way to actually find out which upper layer protocol (such as LoRaWAN) is in use; with that many differences between the hardware and software components of the stack, it remains challenging to find the right abstractions for the kernel.
